On-device AI: the new frontier for security and compliance

As companies accelerate AI adoption, a new governance challenge arises. While cloud-based AI offers scalability and rapid testing, it also introduces complexity related to data sovereignty, regulatory requirements, and security controls. Sensitive data leaves endpoints, moves across networks, and often stays in shared cloud environments. For industries with strict regulations, this creates tension between innovation and compliance.

A growing alternative is reshaping that balance: on-device AI. By moving inference workloads from centralized cloud platforms to intelligent endpoints, organizations can reduce exposure, strengthen data governance, and regain architectural control, all without sacrificing AI-driven productivity.

Cloud AI and the governance challenge

Cloud AI excels at large-scale model training and centralized analytics. However, inference, the phase where AI interacts directly with users and data, often requires real-time processing of sensitive information. In fields such as healthcare, finance, legal services, and public administration, transferring data off-device can raise compliance issues.

Inference workloads must also balance availability, latency, cost, performance, and energy efficiency against regulatory requirements. That balance becomes harder to strike when workloads span diverse compute and storage environments.

For industries that require strict compliance, edge or on-device inference can reduce data transfer and help lower regulatory risk. When models process data locally, organizations can avoid unnecessary data sharing across jurisdictions, cut down on ingress and egress costs, and better control attack surfaces. In other words, governance is not just a matter of policy but also of architecture.
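The article does not prescribe an implementation, but the idea that governance is partly an architectural decision can be sketched as a placement policy. The minimal Python example below assumes a hypothetical request type (`InferenceRequest`), a hypothetical list of jurisdictions the cloud endpoint may process (`ALLOWED_CLOUD_REGIONS`), and a hypothetical cloud round-trip estimate (`CLOUD_ROUND_TRIP_MS`). None of these come from AMD or any real product. It simply shows how residency and latency rules can decide whether a request stays on the endpoint or goes to the cloud.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "endpoint (NPU/CPU/GPU)"
    CLOUD = "cloud"


@dataclass(frozen=True)
class InferenceRequest:
    # Hypothetical request descriptor, for illustration only.
    contains_regulated_data: bool  # e.g. patient records or account details
    data_region: str               # jurisdiction the data originates from
    latency_budget_ms: float       # how long the caller can wait for a result


# Hypothetical policy inputs; a real deployment would load these from its
# own compliance and network configuration.
ALLOWED_CLOUD_REGIONS = frozenset({"EU"})
CLOUD_ROUND_TRIP_MS = 150.0


def choose_target(req: InferenceRequest) -> Target:
    """Return where a single inference request should run."""
    # Regulated data, or data from a jurisdiction the cloud endpoint may not
    # process, never leaves the device.
    if req.contains_regulated_data or req.data_region not in ALLOWED_CLOUD_REGIONS:
        return Target.LOCAL
    # If the network round trip alone would exceed the latency budget,
    # run locally as well.
    if req.latency_budget_ms < CLOUD_ROUND_TRIP_MS:
        return Target.LOCAL
    # Everything else may use shared cloud capacity.
    return Target.CLOUD


if __name__ == "__main__":
    print(choose_target(InferenceRequest(True, "EU", 2000.0)))   # Target.LOCAL
    print(choose_target(InferenceRequest(False, "EU", 2000.0)))  # Target.CLOUD
```

Checking residency before latency means a compliance rule can never be overridden by a performance preference; cost or energy rules could be added as further checks in the same order-of-precedence style.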

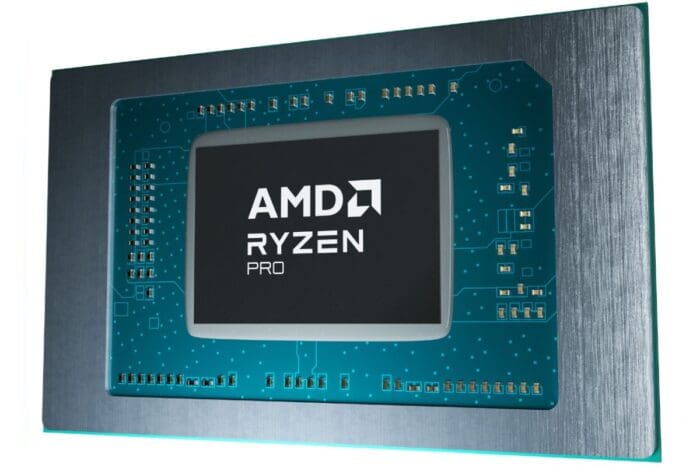

The rise of the NPU

At the core of modern on-device AI is the Neural Processing Unit (NPU), a specialized processor designed to speed up AI tasks locally. Unlike CPUs, which handle general-purpose computing, or GPUs, which focus on parallel graphics and AI acceleration, NPUs are specifically optimized for efficient, low-power AI inference. According to a recent IDC study¹, 83% of information technology decision-makers agree that the main expected impact of AI PCs, which are equipped with NPUs, is the removal of repetitive tasks.

Modern AI PCs with NPUs, such as those powered by AMD Ryzen™ AI PRO processors, can handle an increasing number of AI workloads on-device, helping reduce reliance on cloud processing.

This architectural shift offers potential benefits:

- Lower data exposure: Sensitive data remains on the device rather than being transmitted externally.

- Reduced latency: Real-time AI experiences, such as meeting summaries and live transcription, respond with minimal delay because data does not make a round trip to a remote server.

- Operational resilience: AI capabilities continue functioning even in low-connectivity environments.

- Cost control: Reducing reliance on cloud compute instances lowers recurring inference costs.

For enterprise IT leaders, the NPU is more than just a performance boost. It serves as a strategic governance tool, allowing organizations to manage where AI workloads run and reduce dependence on external infrastructure.

Compliance-sensitive industries

In general, inference requires less computation than training. Unlike training, however, inference is not a one-time stage: it runs continuously in production environments on live, often sensitive data, which makes it subject to stricter compliance standards.

For industries with strict regulatory frameworks, such as those governing financial services, healthcare privacy, and data residency, moving inference closer to the user offers clear strategic benefits. Local processing helps support data localization compliance, reduces third-party exposure, enhances auditability, and lowers the risk of cross-border regulatory violations.

In healthcare, for example, AI-assisted diagnostics on secure endpoints can analyze patient data without transmitting full records to external clouds. In finance, local fraud detection algorithms can evaluate transactions in real time while minimizing regulatory complexity.

Productivity without compromise

The adoption of AI PCs is accelerating across enterprises: a recent AMD report finds that most IT decision-makers plan to adopt them in the near term. The motivation isn’t just about productivity gains; it’s about balancing innovation with control.

AI-powered features such as intelligent meeting transcription, context-aware productivity assistants, AI-enhanced collaboration tools, and developer assistance workflows can now run locally on dedicated NPUs, reducing potential cloud exposure while maintaining performance.

This hybrid architecture, cloud for training and endpoint for inference, allows enterprises to separate innovation from risk.

A unified approach to on-device AI

On-device AI is not a rejection of the cloud, but an architectural evolution. While cloud infrastructure remains essential for large-scale training and centralized analytics, inference is increasingly shifting closer to users and sensitive data. AMD aims to support that shift across both endpoints and data centers, with AMD Ryzen™ AI PRO processors serving the endpoint side.

AMD Ryzen™ AI PRO processors integrate dedicated AMD XDNA™ Architecture NPUs delivering up to 55 TOPS of AI performance, alongside high-performance CPUs and GPUs in a hybrid architecture optimized for enterprise workloads. This combination enables efficient, low-latency local inference and reduces dependence on the cloud.

Notably, AI acceleration is combined with AMD PRO Technologies, which include hardware-based security features like Memory Guard and Microsoft Pluton integration, helping strengthen governance, data protection, and enterprise manageability. In data center settings, AMD extends this approach with AMD EPYC™ server CPUs and AMD Instinct™ GPUs, enabling consistency between training infrastructure and endpoint inference.

As regulatory scrutiny intensifies and data control becomes a strategic priority, AMD's integrated approach, spanning silicon, security features, and scalability, positions AI not just as a performance upgrade but as a governed, enterprise-ready capability built for sustainable deployment.

¹ IDC White Paper, sponsored by AMD, Accelerate Your Organization’s AI Strategy by Deploying High-Performance AI PCs, #US53192925, March 2025