From training to inference: Why AI workloads challenge traditional data centers

In 2022, just before AI entered the zeitgeist, the International Energy Agency (IEA) estimated that data centers consumed 240-340 TWh of electricity, about 1-1.3% of global electricity consumption. That is a substantial figure, yet new infrastructure is proliferating everywhere, from the United States and China to the Middle East. The reason is not only that AI is increasingly important, but also that data centers are not all equal, and much of the legacy infrastructure is ill-suited for AI.

Artificial intelligence is often discussed as if it were one unified capability. In reality, it is a lifecycle that progresses from data preparation to model training and, finally, to inference. Each phase imposes fundamentally different demands on infrastructure. Traditional enterprise data centers were not designed to support this level of diversity in compute intensity and data movement; therefore, new capabilities are required.

Data pipelines: the first bottleneck

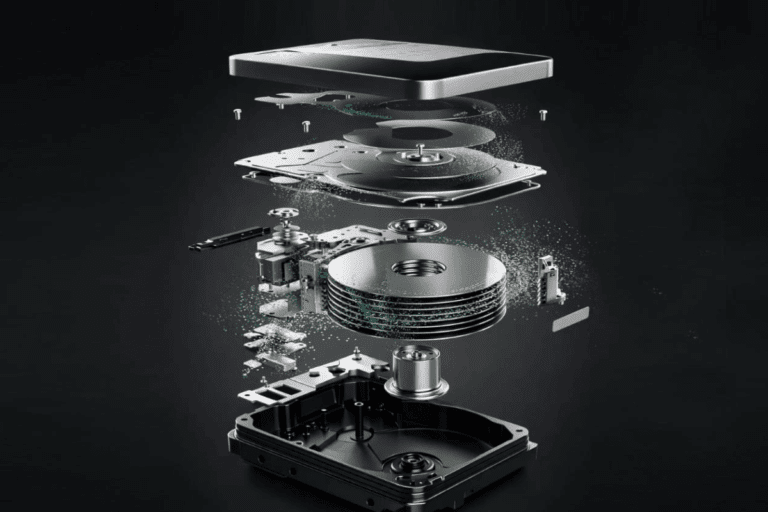

Training is typically considered the first stage of building AI models, but even before it can begin, vast amounts of data must be ingested and prepared. These datasets can be enormous, with LLMs often requiring hundreds of terabytes or even petabytes of processed training data. Thus, legacy storage and networking layers can become bottlenecks before compute resources are fully utilized.

Legacy storage systems struggle to sustain the data access patterns and throughput required by AI workloads. Older hard drives fall short of delivering the performance required for high-throughput, parallel data access, while insufficient network bandwidth slows ingestion and preprocessing. In many cases, what appears to be a compute limitation is actually a data pipeline constraint. Without high-performance storage and fast interconnects, even powerful accelerators sit idle.
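To make the idle-accelerator point concrete, here is a minimal back-of-the-envelope sketch. It assumes storage read bandwidth is the only limit, and every figure in it (GPU count, samples per second, sample size, storage throughput) is a hypothetical value chosen for illustration, not a number from this article or any vendor.

```python
def required_read_gb_per_s(num_gpus, samples_per_s_per_gpu, bytes_per_sample):
    """Aggregate read bandwidth (GB/s) needed to keep every accelerator fed."""
    return num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


def utilization_ceiling(available_gb_per_s, required_gb_per_s):
    """Upper bound on accelerator utilization when storage is the only limit."""
    if required_gb_per_s <= 0:
        raise ValueError("required_gb_per_s must be positive")
    return min(1.0, available_gb_per_s / required_gb_per_s)


# Hypothetical cluster: 64 GPUs, each consuming 2,000 samples/s of 150 KB.
needed = required_read_gb_per_s(64, 2_000, 150_000)
# Hypothetical legacy storage tier sustaining 4.8 GB/s in aggregate.
ceiling = utilization_ceiling(4.8, needed)
print(f"needed: {needed:.1f} GB/s, utilization ceiling: {ceiling:.0%}")
```

With these assumed inputs the cluster needs about 19.2 GB/s, so a 4.8 GB/s storage tier caps utilization at roughly 25% no matter how fast the accelerators are.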

Training: the HPC phase

Training is where AI models take shape, and it is by far the most compute-intensive stage of the AI lifecycle. It requires massive parallel processing, typically delivered by high-end GPUs operating in clusters. The scale can be extreme, with the most powerful models trained on tens of thousands of GPUs for days or even weeks. Such requirements resemble those of high-performance computing more than those of traditional enterprise IT.

Beyond raw compute, training workloads consume substantial energy and generate significant heat, necessitating advanced cooling and power management strategies. Conventional CPU-centric architectures are not optimized for this level of sustained, parallel workload intensity.
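A rough sense of the energy involved can be had from a simple estimate. The sketch below multiplies accelerator count, average power draw, and run time, then scales by power usage effectiveness (PUE, the ratio of total facility energy to IT energy) to account for cooling and other overhead. All inputs are illustrative assumptions, and the estimate ignores CPUs, networking, and storage.

```python
def training_energy_mwh(num_gpus, gpu_power_kw, hours, pue=1.0):
    """Estimate facility energy (MWh) for a training run.

    num_gpus      -- number of accelerators in the job
    gpu_power_kw  -- assumed average draw per accelerator, in kW
    hours         -- wall-clock duration of the run
    pue           -- power usage effectiveness (>= 1.0); covers cooling etc.
    """
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    it_energy_kwh = num_gpus * gpu_power_kw * hours
    return it_energy_kwh * pue / 1000.0


# Hypothetical run: 10,000 GPUs at 0.7 kW each for 14 days, PUE of 1.3.
energy = training_energy_mwh(10_000, 0.7, 14 * 24, pue=1.3)
print(f"estimated energy: {energy:,.1f} MWh")
```

Under these assumptions the run comes to roughly 3,058 MWh, of which about 23% is overhead beyond the accelerators themselves. That overhead is the share that cooling and power management strategies aim to reduce.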

Inference: a different set of demands

Once a model is trained, the infrastructure priorities shift. Inference, where the model starts creating real value, is typically less computationally intensive per operation than training, but it runs continuously in production.

Here, focus shifts toward latency, availability, and efficiency. Applications such as fraud detection or conversational AI require near-instant responses and must scale dynamically to handle fluctuating demand.
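One way to reason about scaling with demand is Little's law, which says the average number of in-flight requests equals the arrival rate times the average latency. The sketch below uses it to size a pool of inference replicas. The per-replica concurrency, the target utilization, and the traffic figures are hypothetical, and a real deployment would also need to account for bursts and tail latency.

```python
import math


def replicas_needed(requests_per_s, latency_s, concurrency_per_replica,
                    target_utilization=0.7):
    """Replicas required so average load stays at the target utilization."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if concurrency_per_replica <= 0:
        raise ValueError("concurrency_per_replica must be positive")
    in_flight = requests_per_s * latency_s  # Little's law: L = lambda * W
    usable_slots = concurrency_per_replica * target_utilization
    return max(1, math.ceil(in_flight / usable_slots))


# Hypothetical service: 200 ms average latency, 8 concurrent requests per replica.
off_peak = replicas_needed(500, 0.2, 8)    # assumed quiet-hours traffic
peak = replicas_needed(2_000, 0.2, 8)      # assumed peak traffic
print(f"off-peak replicas: {off_peak}, peak replicas: {peak}")
```

With these assumed numbers the pool grows from 18 replicas off-peak to 72 at peak, which shows why fixed-capacity infrastructure either overspends during quiet hours or misses latency targets during busy ones.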

While training is mainly done on GPU clusters, inference often benefits from specialized accelerators. The objective is no longer maximum parallelism but rather consistent responsiveness at a controlled cost.

Aligning infrastructure with the AI lifecycle

If AI exposes the structural limits of legacy data centers, the way forward is not incremental tuning but architectural reinvention. As outlined in the “Rearchitecting data centers for AI workloads” white paper, published by AMD, the optimal course of action is aligning infrastructure with each phase of the AI lifecycle, from high-throughput data ingestion to massively parallel training and latency-sensitive inference. That alignment requires purpose-built compute, integrated software, and a coherent strategy across the stack.

For enterprises ready to move beyond experimentation, this means adopting heterogeneous architectures anchored by high-performance chips such as AMD EPYC™ server CPUs and AMD Instinct™ GPUs. AMD EPYC™ CPUs provide the high core density, memory bandwidth, and I/O capacity required to orchestrate data pipelines and manage complex, distributed workloads. AMD Instinct GPUs deliver the parallel performance and efficiency essential for large-scale model training, helping reduce time-to-insight while maintaining energy discipline.

Equally important is unifying these capabilities through a software layer, the AMD Enterprise AI Suite. This integrates optimized frameworks, workload orchestration, and deployment tooling into a production-ready ecosystem. Together, these technologies enable organizations to right-size inference environments, dynamically allocate resources, and maintain compliance across hybrid and edge deployments.

AI is no longer a discrete project; it is becoming a permanent operating model. Enterprises that rearchitect around scalable, energy-efficient platforms may be best positioned to run AI workloads and industrialize them.